TL;DR:

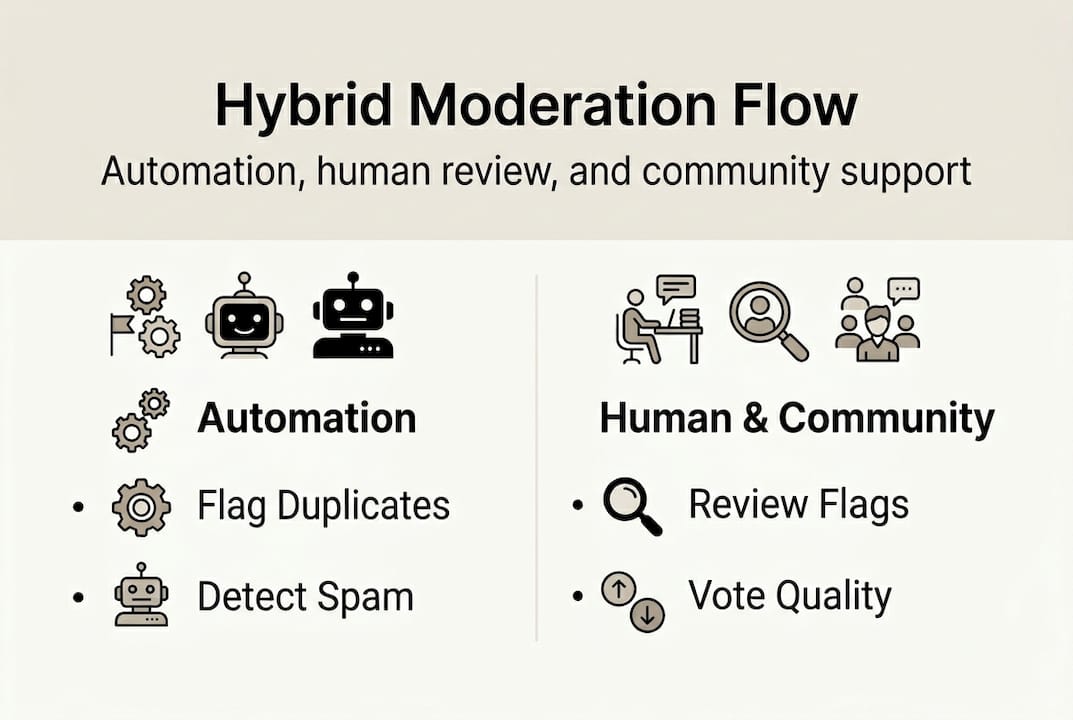

- Hybrid moderation combining AI, human oversight, and community voting ensures deal authenticity.

- Community participation and transparent moderation build trust and improve deal quality.

- Layered moderation significantly reduces fake, expired, or manipulated referral codes.

Most people assume deal-sharing platforms are cesspools of expired codes, self-promotional spam, and bots gaming the system. That assumption is outdated. A new generation of community-driven platforms has built layered moderation systems that combine artificial intelligence, volunteer oversight, and collective user judgment to surface only the most reliable discounts and referral codes. The result is something genuinely useful: a place where you can find verified deals without wading through noise. This guide breaks down exactly how that moderation works, why it holds up under pressure, and how you can both benefit from it and contribute to it.

Table of Contents

- How community moderation works in deal sharing platforms

- What makes hybrid human–AI–community moderation effective?

- Dealing with edge cases: Manipulation, spam, and fake referrals

- How you can participate and benefit from smart moderation

- Why pure automation isn't enough: Lessons from real communities

- Try moderation in action: Find and share working codes

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Hybrid moderation works best | Blending human, AI, and community tools ensures the most effective and trustworthy deal environments. |
| Active participation boosts trust | User engagement and community reporting are crucial for flagging spam and surfacing real deals. |
| Edge cases need vigilance | Manipulation and fake codes require ongoing adaptation by both moderators and members. |
| Directories and referral programs cut risks | Centralized directories lessen harmful posts while referral programs drive authentic growth. |

How community moderation works in deal sharing platforms

Deal-sharing communities face a real credibility problem from day one. Anyone can post a code. Not everyone posts a valid one. The solution most successful platforms have landed on is a hybrid moderation model that layers three distinct forces: automated tools, human moderators, and the community itself.

Automated bots form the first line of defense. They scan new submissions for obvious red flags such as duplicate codes, suspicious account ages, and known spam patterns. Deal-focused subreddits on Reddit, for example, combine volunteer mods, AutoModerator bots, and community downvoting to filter content at scale. Human moderators step in for nuanced calls: Is this code genuinely new, or recycled from last month? Does this account show signs of coordinated promotion? Together, these layers give each referral code a clear path from submission to public visibility.

Here is how each moderation layer contributes along that path:

| Moderation method | Primary role | Speed | Scalability |
|---|---|---|---|
| Automated bots | Catch duplicates and spam patterns | Instant | Very high |
| Human moderators | Judge context and intent | Minutes to hours | Limited |
| AI verification tools | Validate code links and expiry | Fast | High |
| Community voting | Surface quality, bury low-value posts | Ongoing | Very high |

The content moderation models used by top marketplaces show that no single layer is sufficient on its own. Each method compensates for the weaknesses of the others.
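
To make the layering concrete, here is a minimal Python sketch of how a submission might be routed between the layers in the table above. It assumes the bot and AI layers have already attached simple yes/no signals to each submission; the `Submission` fields, the `route` function, and the 30-day trust threshold are hypothetical illustrations, not any particular platform's implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    REJECT = "reject"              # blocked before anyone sees it
    HUMAN_REVIEW = "human_review"  # queued for a moderator's judgment call
    PUBLISH = "publish"            # goes live; community voting takes over


@dataclass
class Submission:
    code: str
    account_age_days: int
    # Signals a bot layer might attach (hypothetical field names).
    is_duplicate: bool = False
    matches_spam_pattern: bool = False
    # Signals an AI verification layer might attach (hypothetical).
    link_resolves: bool = True
    is_expired: bool = False


def route(sub: Submission, min_trusted_age_days: int = 30) -> Decision:
    """Decide which layer handles a new submission next."""
    # Bot layer: instant, high-confidence rejections.
    if sub.is_duplicate or sub.matches_spam_pattern:
        return Decision.REJECT
    # AI verification layer: a confirmed-expired code is rejected outright.
    if sub.is_expired:
        return Decision.REJECT
    # Ambiguous cases (link failed to resolve, very new account) go to a human
    # rather than being auto-decided, since context may explain them.
    if not sub.link_resolves or sub.account_age_days < min_trusted_age_days:
        return Decision.HUMAN_REVIEW
    # Everything else is published; community votes rank it from here on.
    return Decision.PUBLISH
```

For example, a clean code from a 400-day-old account is published, while the same code from a two-day-old account is queued for human review. Sending ambiguous cases to a person instead of auto-rejecting them is what lets each layer cover the others' weaknesses.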

Key moderation rules that most quality platforms enforce include:

- No posting the same code across multiple threads within a set time window

- No self-promotion without disclosure that you benefit from the referral

- Codes must include an expiry date or be flagged as ongoing

- New accounts face a waiting period before posting is enabled

- Repeated posting of invalid codes results in temporary or permanent bans
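
Rules like these are simple enough to check mechanically. The sketch below assumes hypothetical `Post` fields and placeholder thresholds (a 7-day duplicate window, a 3-day new-account wait, and 3 and 6 invalid-code strikes for temporary and permanent bans); it returns every rule a post breaks so a bot or moderator can act on it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical thresholds; each platform sets its own values.
DUPLICATE_WINDOW = timedelta(days=7)
NEW_ACCOUNT_WAIT = timedelta(days=3)
TEMP_BAN_STRIKES = 3
PERMANENT_BAN_STRIKES = 6


@dataclass
class Post:
    author: str
    code: str
    thread_id: str
    posted_at: datetime
    author_joined_at: datetime
    author_invalid_strikes: int = 0   # earlier posts confirmed as invalid codes
    benefits_author: bool = False     # the author earns from this referral
    disclosed: bool = False           # the author stated that they benefit
    expires_at: Optional[datetime] = None
    ongoing: bool = False             # explicitly marked as having no expiry


def rule_violations(post: Post, recent_posts: list[Post]) -> list[str]:
    """Return the rules this post breaks; an empty list means it passes."""
    violations = []
    # Same author, same code, different thread, inside the time window.
    if any(
        other.author == post.author
        and other.code == post.code
        and other.thread_id != post.thread_id
        and abs(post.posted_at - other.posted_at) <= DUPLICATE_WINDOW
        for other in recent_posts
    ):
        violations.append("cross_thread_duplicate")
    # Self-promotion must be disclosed.
    if post.benefits_author and not post.disclosed:
        violations.append("undisclosed_self_promotion")
    # Every code needs an expiry date or an explicit "ongoing" flag.
    if post.expires_at is None and not post.ongoing:
        violations.append("missing_expiry")
    # New accounts must wait before posting.
    if post.posted_at - post.author_joined_at < NEW_ACCOUNT_WAIT:
        violations.append("account_too_new")
    # Repeated invalid codes escalate from a temporary to a permanent ban.
    if post.author_invalid_strikes >= PERMANENT_BAN_STRIKES:
        violations.append("permanent_ban")
    elif post.author_invalid_strikes >= TEMP_BAN_STRIKES:
        violations.append("temporary_ban")
    return violations
```

Returning the full list of violations, rather than stopping at the first one, gives moderators the context to tell a careless newcomer from a repeat offender.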

Following the community guidelines on any platform you join is the single fastest way to build trust and avoid getting flagged. These rules exist not to restrict you but to protect the quality of the deals everyone finds.

What makes hybrid human–AI–community moderation effective?

Understanding the tools is key, but why does this blend of methods actually work in the real world? The answer comes down to what each layer does best and how they cover each other's blind spots.

AI is fast and tireless. It can scan thousands of submissions per hour and flag anomalies that no human team could catch manually. But AI lacks context. It cannot tell the difference between a code that looks suspicious because it is new and one that looks suspicious because it is fraudulent. That is where human moderators earn their role. They read intent, recognize patterns from past bad actors, and apply community-specific judgment.

Hybrid human–AI–community moderation scales well without sacrificing deal authenticity, because it combines the speed of automation with the nuance of human review. Here is how the three approaches compare:

| Moderation type | Accuracy | Speed | Adaptability to new fraud |
|---|---|---|---|
| Human only | High | Slow | High |
| AI only | Medium | Very fast | Low |
| Hybrid | Very high | Fast | Very high |

The community layer adds something neither humans nor AI can replicate: real-time collective feedback. When hundreds of users upvote a working code and dozens report a dead one, that signal is immediate and, in aggregate, reliable. It also creates social accountability. People are less likely to post garbage when they know a community of peers will call it out publicly.

"The most resilient moderation systems treat the community not as a problem to manage but as an active partner in maintaining quality."

If you are browsing code rotation tips, understanding this structure helps you recognize which platforms are worth your time.

Pro Tip: Look for platforms that display moderator activity logs or community voting counts on each post. Transparency about how a code was verified is a strong signal that the platform takes authenticity seriously.
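
The article does not specify how votes are aggregated, so as one illustration: a common technique for ranking items by up/down ratios is the lower bound of the Wilson score interval, which discounts codes that have only a handful of votes. The sketch below treats each vote as a binary "worked" or "failed" report; the function name and sample codes are hypothetical.

```python
import math


def wilson_lower_bound(worked: int, failed: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for the share of 'worked' votes.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    n = worked + failed
    if n == 0:
        return 0.0  # no votes yet: rank at the bottom
    p = worked / n
    denominator = 1 + z * z / n
    centre = p + z * z / (2 * n)
    margin = z * math.sqrt((p * (1 - p) + z * z / (4 * n)) / n)
    return (centre - margin) / denominator


# Hypothetical codes with (worked, failed) vote counts.
votes = {"SAVE10": (3, 0), "RIDE5": (200, 20), "BANK50": (40, 60)}
ranked = sorted(votes, key=lambda c: wilson_lower_bound(*votes[c]), reverse=True)
print(ranked)  # ['RIDE5', 'SAVE10', 'BANK50']
```

Here a code confirmed by 200 of 220 voters outranks one with a perfect 3 of 3, because three votes say little about how reliable a code really is.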

Dealing with edge cases: Manipulation, spam, and fake referrals

Even with robust systems, moderation is a constant arms race. Bad actors adapt, so communities must too. The most common threats are not always obvious, and understanding them makes you a smarter participant.

The top abuses that undermine deal-sharing communities are:

- Vote manipulation: Coordinated groups upvote their own codes or downvote competitors, distorting what appears at the top of feeds.

- Code leakage: Private or beta codes intended for specific users get shared publicly, violating platform terms and often expiring quickly once overused.

- Shilling: Users pretend to be neutral community members while secretly promoting codes they profit from.

- Expired or fake codes: Codes that never worked or have already expired get posted to farm engagement or clicks.

- Account farming: Multiple fake accounts created to bypass posting limits or amplify a single user's submissions.

Edge cases like vote manipulation, fake referrals, and leaked off-platform deals are among the hardest to catch because they mimic legitimate behavior. Platforms counter this with behavior analysis: tracking posting velocity, IP clustering, and account age relative to activity spikes.
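
Behavior analysis of this kind can start with simple heuristics. The following sketch flags accounts on the three signals named above. The `Account` fields and every threshold are hypothetical, and grouping by exact IP address is a deliberate simplification; a real system would likely use richer signals and route flags to human review rather than banning automatically.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    age_days: int
    posts_last_hour: int  # posting velocity
    ip: str               # last-seen IP address (simplified)


def suspicious_accounts(
    accounts: list[Account],
    max_posts_per_hour: int = 5,
    max_accounts_per_ip: int = 3,
    young_age_days: int = 7,
    young_spike_posts: int = 3,
) -> dict[str, list[str]]:
    """Map each flagged account name to the heuristics it tripped."""
    flags = defaultdict(list)
    names_by_ip = defaultdict(list)
    for acct in accounts:
        names_by_ip[acct.ip].append(acct.name)
        # Posting velocity: too many submissions in a short period.
        if acct.posts_last_hour > max_posts_per_hour:
            flags[acct.name].append("high_velocity")
        # Account age vs. activity: a brand-new account suddenly posting a lot.
        if acct.age_days < young_age_days and acct.posts_last_hour >= young_spike_posts:
            flags[acct.name].append("new_account_spike")
    # IP clustering: many accounts behind one address suggests account farming.
    for names in names_by_ip.values():
        if len(names) > max_accounts_per_ip:
            for name in names:
                flags[name].append("ip_cluster")
    return dict(flags)
```

Accounts that trip no heuristic are simply absent from the result, so the output doubles as a review queue for moderators.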

The impact of strong moderation is measurable. Community directories reduced harmful content by 60% when combining real-time user reports with automated behavior monitoring. That is not a minor improvement. It fundamentally changes the experience for every honest user on the platform.

For verified referral codes, the difference between a moderated and an unmoderated environment is the difference between finding a working deal in two minutes and spending twenty minutes testing dead links.

Pro Tip: If you are submitting codes, always include the source, the expiry date, and any usage restrictions. Transparent submissions are far less likely to be flagged, and they build your reputation as a trustworthy contributor. Check out sharing codes fairly for a step-by-step breakdown.

How you can participate and benefit from smart moderation

The most vibrant communities thrive on member participation. Here is how you can take an active role and get the most out of a well-moderated deal-sharing platform.

Steps every community member should follow:

- Verify before you post: Test the code yourself before submitting it. A working code you have personally used carries far more credibility.

- Follow the rules: Each platform has specific submission guidelines. Reading them once saves you from repeated rejections.

- Report abuse when you see it: Flagging a fake code or a suspicious account is a direct contribution to community quality. Do not scroll past problems.

- Upvote legitimate deals: Your upvote is a signal. Use it deliberately to surface deals that genuinely worked for you.

- Use platform tools: Many moderated communities offer dashboards, code trackers, and calculators. Use them.

Following these steps is not just good citizenship. It directly improves the deals you discover. When everyone participates in moderation, the signal-to-noise ratio improves for the whole community. Advertisers and brands also take notice: platforms with strong moderation records attract better partnerships and more exclusive deals.

The financial case for referral programs is strong. Referrals lower customer acquisition costs by 30 to 50 percent compared to traditional ads, which is why brands invest in keeping their referral codes active and valuable. A clean, well-moderated community is where those brands want their codes to live.

Use the max savings workflow to understand how to sequence your code usage for maximum benefit. And if you want to estimate the actual dollar value of a referral program before committing, the referral ROI calculator gives you a concrete number to work with.
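
As a rough illustration of the 30 to 50 percent figure, the sketch below turns a paid-ads customer acquisition cost (CAC) into an estimated referral CAC range and a total-savings range. The function names, the $80 CAC, and the 1,000 customers are hypothetical inputs, and this is not the formula used by the referral ROI calculator mentioned above.

```python
def referral_cac_range(
    ad_cac: float, min_cut: float = 0.30, max_cut: float = 0.50
) -> tuple[float, float]:
    """Estimated (best, worst) referral CAC, assuming a 30-50% reduction vs. ads."""
    best = round(ad_cac * (1 - max_cut), 2)   # largest reduction
    worst = round(ad_cac * (1 - min_cut), 2)  # smallest reduction
    return best, worst


def estimated_savings(new_customers: int, ad_cac: float) -> tuple[float, float]:
    """(low, high) total savings from acquiring customers via referrals instead of ads."""
    best_cac, worst_cac = referral_cac_range(ad_cac)
    return new_customers * (ad_cac - worst_cac), new_customers * (ad_cac - best_cac)
```

With those inputs, the referral CAC comes out at $40 to $56, so acquiring 1,000 customers through referrals would save roughly $24,000 to $40,000 compared with paid ads.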

Why pure automation isn't enough: Lessons from real communities

Here is an uncomfortable truth that most platforms avoid saying out loud: AI moderation, no matter how sophisticated, will always miss things that humans catch instantly. Not because the technology is bad, but because context is irreplaceable.

We have seen this play out repeatedly. An automated system flags a legitimate code because it resembles a previously abused pattern. A human moderator looks at the submitter's history, sees three years of honest contributions, and approves it in thirty seconds. No algorithm gets that right consistently.

The deeper issue is cultural. Communities that rely entirely on automation tend to feel sterile. Members stop reporting abuse because it feels pointless. Engagement drops. Quality follows. The platforms that sustain genuine trust are the ones where active members feel ownership over the space.

Social accountability, the knowledge that real people are watching and judging, is a more powerful deterrent than any bot. Our fraud prevention insights consistently show that communities with visible human moderation have lower abuse rates than those running on automation alone. Technology is the foundation. Culture is what holds the building up.

Try moderation in action: Find and share working codes

Ready to put these insights into action? Here is a platform where moderation powers real savings.

LovableRewards applies everything discussed in this article: AI-based verification, community voting, and active human oversight work together to keep every listed code legitimate and current. You are not guessing whether a deal works. The platform tells you.

Whether you want to discover verified discounts across e-commerce, finance, and transportation, or submit your own referral codes to a community that will actually see them, LovableRewards gives you the tools to do both safely. Explore the free tools available to contributors and deal hunters alike, including the ROI calculator and code submission tracker. Join a community where moderation is a feature, not an afterthought.

Frequently asked questions

What are the biggest threats to deal-sharing communities?

Vote manipulation, fake referrals, and leaked deals are the top challenges that platforms must address with smart, layered moderation strategies.

How do communities verify that referral codes are authentic?

Communities use a mix of AI tools, moderator review, and user reports to validate codes. Volunteer mods, bots, and downvoting work together to filter out invalid submissions before they reach wide audiences.

Why use community directories for deals?

Community directories cut harmful content by 60% by flagging questionable posts early, making them far safer and more reliable than unmoderated forums for finding authentic referral codes.

Can referral programs really lower marketing costs?

Yes. Referral programs reduce acquisition costs by 30 to 50 percent compared to paid ads, which is why brands actively support well-moderated communities where their codes get legitimate exposure.